Tools Suck

Let me be straight with you: I hate most tools. Software developers tend to write tools for themselves, which makes sense. But then they just publish the highly customized thing they use. Oftentimes, because they're software developers, there are unaccounted-for dependencies, a mess of custom directories, and even arbitrary naming conventions.

What results is stuff that's not (gasp!) user-friendly. In fact, this is kind of a mantra in the software world: "We're not user-friendly, and we like it that way." That's all fine and dandy if your purpose is to enhance your job security. But that's not how you should build something for other people to use (known as a tool).

Since I hate most tools, I end up doing a lot of things by hand until I find the right tool for the job. In some cases I settle for "the best tool for now", but most of the time I just do things by hand, because it's less tedious than learning a tool and reconfiguring my system around it, only for it to break next week anyway.

What is High Availability?

In this context, let's talk about "High Availability". Often called HA by system administrators, high availability is exactly what it sounds like: making something available as much of the time as possible. A simple way to understand this is with a website. If this blog were hosted on a single server and that server crashed (for whatever reason), you wouldn't be able to get to it anymore. In a high-availability setup, there would be multiple servers hosting the same blog, and if one died (or was just down for updates), you would still see the blog because another server would be doing the job of hosting it.
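
To put rough numbers on that, here's the usual back-of-the-envelope formula. It assumes the two servers fail independently (a simplification, since real failures often share a cause) and that either server alone can serve the whole blog. If each server is up a fraction A of the time, the pair is only down when both are down at once:

$$
A_{\text{pair}} = 1 - (1 - A)^2, \qquad A = 0.99 \;\Rightarrow\; A_{\text{pair}} = 1 - (0.01)^2 = 0.9999
$$

In other words, two servers that are each up 99% of the time (about 3.65 days of downtime a year) give you roughly 99.99% together (about 53 minutes a year), at twice the hardware cost.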

As you can imagine, this comes with a cost: namely, more servers. If you wanted your computer to be highly available so you could keep working if it crashed, you'd need a second computer just like it, running all the time, ready for you to switch to if yours died. So high availability is expensive.

Some system administrators won't work without it. In fact, you'll see this all over the internet and in home lab communities. People run two Ethernet cables to every network-connected device in their house. Some run two routers. People even run two Pi-hole DNS servers (it's literally just there to reduce ads!). Don't get me wrong: having backups is important, and knowing how to set up a highly available service is valuable (and the topic of this blog post). But not everything needs to be highly available, and, to be honest, not everything needs a backup either.

This may come as a shock to those sysadmins, but guess what? Very few things need to be highly available. In fact, the more available you require something to be, the more of the things it depends on have to be highly available too. If you want your server to be highly available, you don't just need a second server. You also need a second power outlet, probably a second battery backup, maybe even a second circuit, or maybe a different physical facility to guard against fire or weather, or maybe a different country in case politics gets out of hand. Can we get it in space? See what I mean? Perfect availability is a pipe dream.
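
The same kind of arithmetic shows why the list keeps growing. Anything every server depends on multiplies in, so a redundant pair sharing one power outlet can never beat that outlet. As an illustration only, assume independent failures, two servers that are each up 99% of the time, and a single shared outlet that is up 99.9% of the time:

$$
A_{\text{total}} = A_{\text{outlet}} \cdot \left[1 - (1 - A_{\text{server}})^2\right] = 0.999 \times 0.9999 \approx 0.9989
$$

The pair on its own would reach 99.99%, but the shared outlet caps the whole setup below 99.9%. Duplicating the outlet lifts that cap, and then the next shared dependency (the circuit, the building) becomes the limit.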

When should we HA something?

So keep it simple. What do you use it for? When do you use it? What happens if you can't use it? Or, in the mantra of the military: what do we have, and what do we need? If your internet goes down, it might mess up your Netflix binge, but will it wreck your life? Oh wait, you work from home? Then maybe it's a bigger deal. Fire alarm? Probably more important. Thermostat? Less so, but probably key in the winter. Can you see the priority list forming? But we're not talking about the four walls of your house. We're talking about software.

What software actually keeps you going day to day? In my case, I run a software business and need my website to be "highly available" so that any time a client wants to, they can visit it and learn about my company. Now, I can offload this to another tool, and in most cases I do: I let the cloud manage the availability and just put my stuff on a server from a cloud provider such as AWS. That's a pretty good tool.

BAM. We're done. High Availability. No more effort needed.

Realistically, though, running things in the cloud is much more expensive, so I run a lot of stuff myself. One example of a tool that you might think should be highly available, but probably doesn't need to be, is a build server. If my builds don't run, everything keeps running; it just doesn't get updated. This isn't the end of the world, and my development cycle isn't moving so quickly that I can't take the time to fix my build server if it goes down.

On the other hand, my reverse proxy is crucial. It not only serves many websites for my clients (who would be very upset if those went down), but also lets me have stable DNS even if my IP address changes. I'd really like to invest in running a second one.

Cut to the chase. How do we do it?

Because there are so many bad tools out there, I'm going to share with you a set of good tools that I've found to be easy to set up, reliable, and not prone to user error. Ready?

cron

rsync

keepalived

Why these three tools? Because they're all simple Linux command-line tools. Their developers didn't get super fancy and try to overdo it. Their interfaces are stable, since they've been around a long time, and when tied together, they're fully automatic.

rsync keeps the servers in sync

rsync is a very simple tool for keeping two folders the same. When I'm running two servers, I don't want to have to change the second one every time I change the first. So I run rsync on a regular interval to keep the servers in sync. Here's what it looks like to use rsync to keep my nginx configuration synced between the two servers, so the reverse proxy behaves the same on both:

```shell
rsync -av /etc/nginx/ <user>@<server ip>:/etc/nginx
```

You can find more about rsync here.

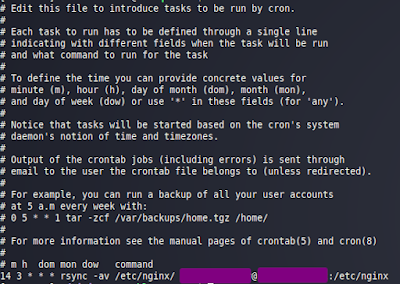
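
If you want the sync to be a bit safer before handing it to cron, here's a minimal sketch of a wrapper script, not a definitive implementation. The script name (sync-nginx.sh), the sync_nginx function, and the 192.0.2.20 address are hypothetical, and it assumes passwordless SSH keys plus a remote user (such as root) allowed to write /etc/nginx and reload nginx. It validates the config with nginx -t before and after copying, and adds rsync's --delete flag so files removed on the primary also disappear from the standby.

```shell
#!/bin/sh
# sync-nginx.sh: hypothetical wrapper around the rsync command above.
# Assumes passwordless SSH keys to the standby and a remote user (e.g. root)
# that may write /etc/nginx and reload nginx there.

# cron runs jobs with a minimal PATH; nginx usually lives in /usr/sbin.
PATH="$PATH:/usr/local/sbin:/usr/sbin:/sbin"
export PATH

sync_nginx() {
    remote="$1"    # e.g. root@192.0.2.20 (placeholder address)

    # Don't push a config that doesn't even pass validation here.
    nginx -t || return 1

    # -a: archive mode (recursive, keeps permissions and timestamps).
    # The trailing slash on the source copies the *contents* of /etc/nginx.
    # --delete also removes files on the standby that were deleted here.
    rsync -av --delete /etc/nginx/ "$remote:/etc/nginx" || return 1

    # Validate on the standby too, then reload so the new config is live.
    ssh "$remote" 'PATH=/usr/sbin:/usr/bin:/sbin:/bin; nginx -t && systemctl reload nginx'
}

# Only sync when given a destination, e.g.: sync-nginx.sh root@192.0.2.20
if [ "$#" -gt 0 ]; then
    sync_nginx "$1"
fi
```

Run it as sync-nginx.sh root@192.0.2.20, with your standby's real address. If the standby keeps files of its own under /etc/nginx, drop --delete, since it would remove them.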

cron does the automation

Of these three, cron's role is the simplest: it runs rsync on a schedule. That's it. In my case, nightly is enough; I don't add new websites behind my reverse proxy all that often, so a nightly sync keeps things up to date. Here's my crontab for running the above command nightly:

A decent tutorial on cron is here.

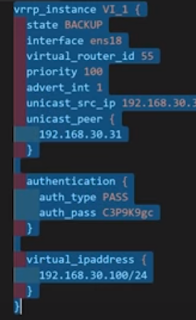
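
As a sketch of how the scheduling step could be scripted, the hypothetical helpers below build and install a nightly crontab line. The 02:00 default and the command you pass in are assumptions; the five leading fields of a crontab line are minute, hour, day of month, month, and day of week.

```shell
#!/bin/sh
# Hypothetical helpers for scheduling the sync script with cron.

# cron_entry COMMAND [HOUR]: print a crontab line that runs COMMAND at
# minute 0 of HOUR (02:00 by default) every day. The five time fields are
# minute, hour, day of month, month, and day of week.
cron_entry() {
    printf '0 %s * * * %s\n' "${2:-2}" "$1"
}

# install_cron_entry COMMAND [HOUR]: add that line to the current user's
# crontab, first dropping any existing line that mentions COMMAND, so
# running it twice doesn't create a duplicate.
install_cron_entry() {
    [ -n "$1" ] || return 1    # an empty pattern would match (and drop) every line
    # crontab -l fails when no crontab exists yet; treat that as empty.
    { crontab -l 2>/dev/null | grep -vF "$1"; cron_entry "$@"; } | crontab -
}
```

For example, install_cron_entry '/usr/local/bin/sync-nginx.sh root@192.0.2.20', then crontab -l to check the result.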

keepalived handles failover

keepalived is a simple tool for building a pool of servers that take over for each other should one fail. Well, it doesn't create the pool; it adds a server to the pool. You run keepalived on each server you want in the pool, with essentially the same configuration (the shared settings are what identify the pool), and the servers share a virtual IP address: whichever one is currently the master answers on that address, and if the master stops announcing itself, a backup takes the address over. Setting up keepalived just requires a configuration file, /etc/keepalived/keepalived.conf, which looks something like this:

You can find a lot more detail about keepalived here. I also recommend this video on it.
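
The exact file depends on your network, so here's a minimal sketch under stated assumptions: the render_keepalived_conf helper is hypothetical, and the interface name (eth0), virtual_router_id (51), and virtual IP (192.168.1.250/24) are placeholders to replace with your own. Both servers get the same virtual_router_id and virtual IP, which is what ties them into one pool; only state and priority differ, and the server with the higher priority holds the address while it's healthy.

```shell
#!/bin/sh
# Hypothetical helper: print a minimal keepalived.conf for one server.
# Usage: render_keepalived_conf STATE PRIORITY   (e.g. MASTER 100, BACKUP 90)
# eth0, router id 51 and 192.168.1.250/24 are placeholders for your network.
render_keepalived_conf() {
    state="$1"       # MASTER on the primary, BACKUP on the standby
    priority="$2"    # the higher priority holds the virtual IP
    cat <<EOF
vrrp_instance VI_1 {
    state $state
    interface eth0
    virtual_router_id 51
    priority $priority
    advert_int 1
    virtual_ipaddress {
        192.168.1.250/24
    }
}
EOF
}
```

On the primary you could run render_keepalived_conf MASTER 100 > /etc/keepalived/keepalived.conf, and on the standby render_keepalived_conf BACKUP 90 > /etc/keepalived/keepalived.conf, then restart keepalived on both (systemctl restart keepalived). Point your port forwarding or DNS at the virtual IP rather than at either server's own address.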

Have we missed anything? Well, we could go further and move the second server off-site. We could certainly look at syncing other directories too (but this works for now). I think we're doing pretty well. I'll be improving my scripts in the coming days, but this is all working, and it didn't require 30 hours of mastering tool configurations. I'm pretty happy with it.
